TOTW: Google's Project Ara Modular Phone May Be The Future Of Smartphones, October 30, 2014

Speculations

The Promise Of Augmented Reality

08 years

Just two sessions into Augmented World Expo (AWE) 2016, and the incredible feeling of excitement about the industry had already set in. In a talk entitled The Butterfly Dream: Smart Eyewear in 2031, Dan Eisenhardt discussed the future of AR and the Internet of Things, and it’s no surprise that at an AR/VR/IoT conference the perspective on these technologies is optimistic. Eisenhardt addressed a concern shared by many augmented reality skeptics: will people really wear things on their head all the time?

Basically, this entire conference is a testament to the fact that plenty of large corporations are saying yes. In his talk, Eisenhardt argued that any technology that alters our intake of information, and especially one that alters our appearance even slightly, can suffer backlash, just as the book, the wristwatch, and the smartphone once did. (Yes, even books faced some backlash, with people saying kids were spending too much time inside reading.) But just as with the book, the wristwatch, and the smartphone, the utility of these technologies ultimately overcomes these concerns. All the numbers have been pointing in that direction: incredible growth for the last 5 or so years, the colossal projected potential in the industry, and more. But aside from that, to zero in on AR in particular, the potential use-cases are mind-blowing. Walk into a clothing store and a large arrow appears in your vision, identifying clothes in your size? Within a few years, definitely. Texting, calling, searching, helpful information, all showing up in your vision in an unobtrusive and simple way? Almost already here.

While we’re still some distance from having AR glasses look identical to standard prescription glasses, we’re on our way. Even since last year, the glasses being showcased today are smaller, faster, lighter, and have higher-quality displays. The experiences you can already have with headsets like HoloLens and ODG R7 are quite amazing, and it’s only natural that by 2031, fifteen years from now, the best use-cases will have had plenty of time to become mainstream. Eisenhardt went as far as to say the question will not be “should I get AR glasses?” in 2031 but “when will I upgrade my current AR glasses?” In other words, AR will be as ubiquitous as smartphones are today – you can’t leave home without them. I can’t quite decide if that’s scary or exciting.

Sergey Brin, co-founder of Google, wearing the (for the most part) failed Google Glass, the first mainstream AR headset.

I’m a technological optimist; I feel that AR, VR, and other technologies will substantially change the world as we know it. And I expect to jump on the bandwagon as soon as the prices become more competitive. Nevertheless, the notion that our current reality might be replaced with one that is constantly augmented by computer-generated graphics is slightly unsettling. I like reality as it is, and while I trust AR can make our lives easier and more productive, just as smartphones have, I can’t help but wonder whether being completely plugged into an augmented, even completely simulated world with VR would take a certain realness out of life. A realness I enjoy.

Something about AR glasses, wearing computers on our faces, poses a large change to that lifestyle, that reality. Eisenhardt talked about how reality is subjective anyway, how my reality may be different from yours, how easy it is to alter our current perspective of reality, and how holding onto a non-augmented reality is rejecting a technology just because you want to protect something that didn’t really exist in the first place. If you’re fully immersed in a simulation, a VR/AR experience, such that you feel like you’re in another world, move around like you’re in another world, hear, smell, touch, and taste like you’re in another world, who’s to say you’re not in another world?

This is, of course, speculation. By 2031, I would expect AR to be at least something you use on a weekly basis, if not daily. By then, using AR would be no different than opening up a laptop, checking your smartphone, or even reading a book. The idea of our current, unaltered, unaugmented reality is slowly being broken down, each new technological advance making our reality seem more and more mutable. AR is very exciting; I’ve had the chance to demo some pretty incredible experiences here at AWE 2016, from beating up a robot and petting a dog with Midas Touch Games, to making music with Subpac, to exploring a prehistoric world of dinosaurs with LifeLiQe, all in AR and VR. And while the novelty of these experiences will soon fade away, they will be replaced by very practical and helpful uses of the technology. While it may be scary, and certainly is exciting, there’s no doubt AR will be a large player in the future of entertainment, enterprise, and more.

The Almost Impossible Ethical Dilemma Behind Autonomous Cars

08 years

You’re driving down the road in your Toyota Camry one morning on your way to work. You’ve been driving for 15 years now and pride yourself on the fact that you’ve never had a single accident. And you have to drive a lot, too; every morning you commute an hour up to San Francisco to your office. You pull into a two-lane street lined on both sides with suburban housing, and suddenly realize you took a wrong turn. You quickly look down at your smartphone, which is running Google Maps, to find a new route to the highway. When you look back up, you’re surprised to see that a group of 5 people, 3 adults and 2 kids, has unknowingly walked into your path. By the time you and the group notice each other, it’s too late for you to hit the brake or for the pedestrians to run out of the way. Your only option to save the 5 people from being injured, or even killed, by your car is to swerve out of the way… right into the path of a woman walking her child in a stroller. You notice all of this in the half a second it takes you to close the distance between you and the group to only 3-4 yards.

You now have but milliseconds to decide what path to take. What do you do? But more to the point of this article, what would an autonomous car do?

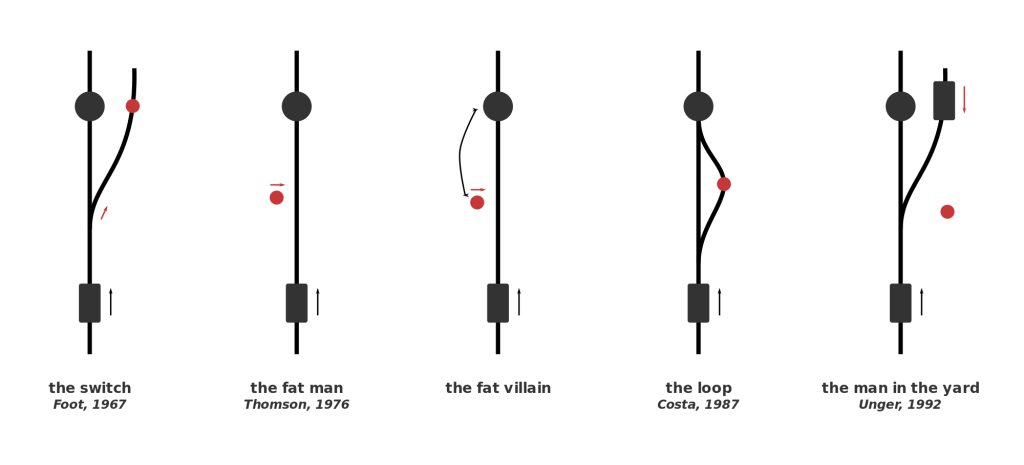

That narrative is a variant of the classic situation known as the Trolley Problem. The Trolley Problem has many variations, some more famous than others, but all of them follow the same general storyline: you must choose between accidentally killing 5 people (e.g., hitting them with your car) or purposefully taking an action (e.g., swerving out of the way) that kills one person. This type of situation is obviously one that no one wants to find themselves in, and is so unlikely that most people avoid it their entire lives. But in the rare cases where this situation occurs, the split-second decision a human makes will vary from person to person and from situation to situation.

But no matter the outcome of such a tragic event, if it does end up happening, the end result will generally be the fault of a distracted driver. What will happen, though, when this decision is completely in the hands of an algorithm, as it will be when autonomous cars ubiquitously roam the streets years from now? Every day autonomous cars become more something of the present than of the future, and that leaves many worried. Driving has been ingrained in us for a century, and for many, giving that control up to a computer will be frightening. This is despite the fact that in the years that autonomous cars have been on the roads, their safety record has been excellent, with only 14 accidents and no serious injuries. While 14 may seem like a lot, keep in mind that each and every incident was actually the result of human error by another driver, and many were the result of distracted driving.

I’d say that people are more worried about situations like the Trolley Problem, rather than the safety of the car itself, when driving in an autonomous car. Autonomous cars are just motorized vehicles driven by algorithms, or intricate math equations that can be written to make decisions. When an algorithm written to make a car change lanes and parallel park has to make almost ethically impossible decisions, choosing between just letting 5 people die or purposely killing 1 person, we can’t really predict what it would do. That’s why autonomous car makers can’t just let this problem go, and have to delve into the realm of philosophy and make an ethics setting in their algorithms.

This won’t be an easy task, and will require everyone, from the car makers to the customers, to think about what split-second decision they would make, so the cars can then be programmed to do the same. This ethics setting would have to work in all situations; for instance, what would it do if instead of 5 people versus one person, it was a small child versus hitting an oncoming car? One suggested solution would be to have an adjustable ethics setting, where the customer gets to choose whether they would put their own life over a child’s, or kill one person over letting 5 people die, etc. This would redirect the blame back to the consumer, giving him or her control over such ethical choices. Still, that kind of decision, which very well could determine the fate of you and some random strangers, is one that nobody wants to make. I certainly couldn’t get out of bed and drive to work knowing that a decision I made could kill someone, and I’d bet I’m not alone on that one. In fact, people may even avoid purchasing an autonomous car with an adjustable ethics setting just because they don’t want to make that decision or live with the consequences.

So what do we do? Nobody seems to want to make these kinds of decisions, even though making them is absolutely necessary. Jean-Francois Bonnefon, at the Toulouse School of Economics in France, and his colleagues conducted a study that may help us all come up with an acceptable ethics setting. Bonnefon’s logic was that people will be happiest driving a car that has an ethics setting close to what they believe is a good setting, so he tried to gauge public opinion. By asking several hundred workers on Amazon’s Mechanical Turk crowdsourcing platform a series of questions regarding the Trolley Problem and autonomous cars, he came up with a general public opinion of the dilemma: minimize losses. In all circumstances, choose the option in which the fewest people are injured or killed; a sort of utilitarian autonomous car, as Bonnefon describes it. But, with continued questioning, Bonnefon came to this conclusion:

“[Participants] were not as confident that autonomous vehicles would be programmed that way in reality—and for a good reason: they actually wished others to cruise in utilitarian autonomous vehicles, more than they wanted to buy utilitarian autonomous vehicles themselves.”

Essentially, people would like other people to drive these utilitarian cars, but are less enthusiastic about driving one themselves. Logically, this is a sensible conclusion. We all know that we should make the right decision and sacrifice our own life for that of someone younger, like a child, or a group of 3 or 4 people, but when it comes down to it only the bravest among us are willing to do so. While these scenarios are few and far between, the decisions made by the algorithm in that sliver of a second could be the difference between the death of an unlucky passenger and that of an even more unlucky passerby. This “ethics setting” dilemma is a problem that can’t just be delegated to the engineers at Tesla or Google or BMW; it has to be one that we all think about and decide on collectively, in a way that will hopefully make the future of transportation a little more morally bearable.
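To make the “minimize losses” rule and the idea of an adjustable ethics setting a little more concrete, here is a minimal Python sketch. It is purely illustrative and is not how any car maker’s software works: the `Maneuver` type, its fields, and the `passenger_weight` knob standing in for an owner-chosen ethics setting are all hypothetical names made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Maneuver:
    """One hypothetical option available to the car in an emergency."""
    name: str
    bystanders_harmed: int
    passengers_harmed: int


def choose_maneuver(options, passenger_weight=1.0):
    """Return the option with the lowest weighted harm.

    passenger_weight=1.0 is the purely utilitarian setting: everyone counts equally.
    Values above 1.0 model a hypothetical setting that favors the car's passengers.
    """
    if not options:
        raise ValueError("need at least one option")
    return min(
        options,
        key=lambda m: m.bystanders_harmed + passenger_weight * m.passengers_harmed,
    )


# The scenario from the opening story: stay on course (5 people) or swerve (1 person).
story = [
    Maneuver("stay on course", bystanders_harmed=5, passengers_harmed=0),
    Maneuver("swerve", bystanders_harmed=1, passengers_harmed=0),
]
print(choose_maneuver(story).name)
```

With the weight left at 1.0, the sketch simply picks whichever option harms the fewest people. Raising the weight makes the car more protective of its own passengers, which is exactly the kind of choice the survey suggests people want for themselves but not for everyone else.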

Why Other Companies Should Follow Alphabet’s Lead

09 years

For as long as it has existed, the public has identified the Google brand with the ridiculously popular search engine of the same name. Since 1998, Google search has grown exponentially while staying pretty much the same, yet the company Google has expanded aggressively into fields well beyond search, both through acquisitions such as YouTube and Nest, and organically via the company’s extensive R&D initiatives such as Google Glass and the company’s autonomous car. Still, this has all fallen under the good old multi-billion dollar umbrella of Google. That all changed last week. Not that initiatives inside Google have fundamentally changed; the profit driver continues to be search and advertising, with many long-term prospects hoping to flower eventually. Nevertheless, the restructuring of management lines arguably has dramatic long-term implications, in my opinion for the better.

In a nutshell, Google essentially created a holding company called Alphabet that owns Google and many smaller companies that Google has acquired or created, such as Nest and Calico (Google’s longevity initiative). Alphabet is now a portfolio of enterprises managed by founders Larry Page and Sergey Brin, many with distinct CEOs who have a fair amount of independence. This shift came as a shock to everyone outside of Google (and likely many inside the company), sounding more like one of the company’s classic April Fool’s jokes than a typical corporate maneuver. Renaming a company with one of the top business brands in the world? Insane in many respects, not to mention handing the CEO role of “Google Classic” to another executive, Sundar Pichai.

So why is this a good idea, and why should other companies consider following Google’s lead? It really comes down to what they are trying to accomplish as a company. In the announcement letter that you can read at abc.xyz, they wrote the following, which helps explain their reasoning behind the change:

“As Sergey and I wrote in the original founders letter 11 years ago, ‘Google is not a conventional company. We do not intend to become one.’”

Alphabet’s initiatives are far-flung and have much more potential to change the world than Google’s traditional “cash cow” businesses. It’s hard not to see some of Alphabet’s initiatives becoming wildly successful and ultimately spinning out into large, independent public companies. For instance, I previously mentioned Calico as one of the companies Alphabet is keeping under its wing. Calico is a scientific research and technology company; its ambitious goal is to research and eventually create ways to extend life and let people live healthier. That goal, if reached with the help of Alphabet, could very well change the world in a major way.

What really excites me about Alphabet is that they’re doing precisely what I would do with all that money and resources: create and finance projects that will change the future. Google the search engine has become a very conventional business in the Internet age, but Google the company aspires to much more than just rolling in the cash and adding to its existing product lines (I hate to say it, but I’m looking at you, Apple). In an age of tech titans, companies such as Google, Facebook, Amazon, Apple, and Microsoft are all angling to stake their claim to the future. And under Alphabet, Google aims to establish a leading innovation platform by “letting many flowers bloom.” Rather than sticking to a couple of odd ventures and mainly staying a conventional company, Alphabet lets Google expand into a business set on creating a better future. And if this change in corporate structure will help facilitate that, then I say go for it.

If you want to read the original Alphabet announcement letter, click HERE.

Augmented Vs. Virtual Part 2 – Augmented Reality

09 years

Reality is very personalized: it is how we perceive the world around us, and it shapes our existence. And while individual experiences vary widely, for as long as humans have existed, the nature of our realities has been broadly relatable from person to person. My reality is, for the most part, at least explainable in terms of your reality. Yet as technology grows better and more widespread, we are coming closer to an era where my reality, at least for a period of time, may be completely inexplicable in terms of your reality. There are two main ways to do this: virtual reality and augmented reality. In virtual reality, technology immerses you in a different, separate world. My earlier article on VR was the first of this two-part series, and can be found HERE.

Whereas virtual reality aims to totally replace our reality with a new one, augmented reality does what the name suggests: it augments, changes, or adds on to our current, natural reality. This can be done in a wide variety of ways, the most popular currently being a close-to-eye translucent screen that projects graphics on top of what you are seeing. This screen can take up your whole field of view, or just a corner of your vision. Usually, the graphics or words displayed on the screen are not completely opaque, since they would then block your view of your real reality. Augmented reality is intrinsically designed to work in tandem with your current reality, while VR dispenses with it in favor of a new one.

With this more conservative approach, augmented reality (AR) likely has greater near-term potential. For VR, creating a new world to inhabit limits many of your possibilities to the realm of entertainment and education. AR, however, has a practically unlimited range of use cases, from gaming to IT to cooking to, well, pretty much any activity. Augmented reality is not limited to this role, but for now it works best as a portable heads-up display, a display that shows helpful situational information. For instance, there was a demo at Epson’s booth at Augmented World Expo 2015 where you got to experience a driving assistance app for AR. In my opinion, the hardware held back the software in that case, as the small field of view was distracting and the glasses were bulky, but you could tell the idea has some potential. At AWE, industrial use cases as well as consumer use cases were also prominently displayed, which included instructional IT assistance, such as remotely assisted repair (e.g., in a power plant, using remote visuals and audio to help fix a broken part).

Before I go on, I have to mention one product: Google Glass. No AR article is complete without mentioning the Google product, the first AR product to make a splash in the popular media. Yet not long after Google Glass was released, it started fading out of the public’s eye. Obvious reasons included the high price, the very odd look, and the social novelty: people couldn’t think of ways they would use it. Plus, with the many legal and trust issues that went along with using the device, it often just didn’t seem worth the trouble. Rumor has it that Google is working on a new, upgraded version of the device, and it may make a comeback, but in my opinion it’s too socially intrusive and new to gain significant near-term social traction.

Although many new AR headsets are in the works (most importantly Microsoft’s HoloLens), the development pace is lagging behind VR, which is already at the stage where developers are focused on enhancing current design models, as I discussed in the previous VR article. For AR, the situation is slightly different. Hardware developers still have to figure out how to create an AR headset that is cheap but also has a full field of view, is relatively small, and doesn’t obstruct your view when not in use, among other complications. In other words, the hardware of AR occasionally interrupts the consumption of AR content, while for VR hardware, the technology is well on its way to overcoming that particular obstacle.

Beyond these near-term obstacles, if we want to get really speculative, there could be a time when VR will surpass AR even in pure utility. This could occur when we are able to create a whole world, or many worlds, to be experienced in VR, and we decide that we like these worlds better. When the immersion becomes advanced enough to pass for reality, that’s when we will abandon AR, or at least over time use it less and less. Science fiction has pondered this idea, and from what I’ve read, most stories go along the lines of people just spending most of their time in the virtual world and sidelining reality. The possibilities are endless in a world made completely from the fabric of our imagination, whereas in our current reality we have a lot of restrictions on what we can do and achieve. Most likely, this won’t happen for a long, long time, so we have nothing to worry about for now.

Altogether, augmented reality and virtual reality are both innovative and exciting technologies that have tremendous potential to be useful. On one hand, AR will most likely be used more than VR in the coming years for practical purposes, since it’s grounded in reality. On the other hand, VR will be mostly used for entertainment, until we hit a situation like the one I mentioned above. It’s hard to pit these two technologies against each other, since they both have their pros and cons, and it really just depends on which tech sounds most exciting to you. Nonetheless, both AR and VR are worth lots of attention and hype, as they will both surely change our world forever, for better or worse.

The Simulation Argument Part #2 – The Hypothesis Explained

09 years

This is the second article in a two-part FFtech series on The Simulation Argument. If you haven’t already read the first article, go HERE before reading the following.

In the previous FFtech article on the Simulation Argument, we established that Bostrom’s statement that the first proposition is false is a reasonable assumption. Just to remind you, these are the propositions, and one has to be true:

#1. Civilizations inevitably go extinct before reaching “technological maturity,” the time at which civilization can create a simulation complex enough to simulate conscious human beings. Meaning: no simulations.

#2. Civilizations can reach technological maturity, but those who do have no interest in creating a simulation that houses a world full of conscious humans. Meaning: no simulations. Not even one.

#3. We are almost certainly living in a simulation.

Now, on to the second postulate. Since we have decided that there are quite likely alien civilizations in the universe that have developed an ability to create “ancestor simulations”, as Bostrom likes to call them, the second postulate says that these civilizations simply have no interest in creating a simulation of fully conscious human beings. Most likely, a civilization creating a simulation of how humans lived before they reached “technological maturity” will be future humans, as it is unlikely that we will have met an alien civilization before we develop the capacity to create an ancestor simulation of our own; the distance to other habitable star systems is simply too great.

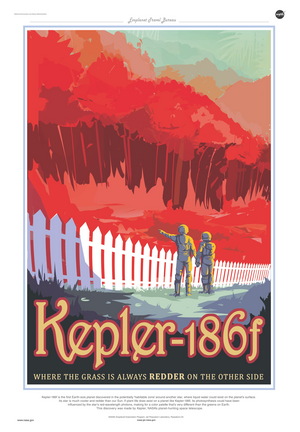

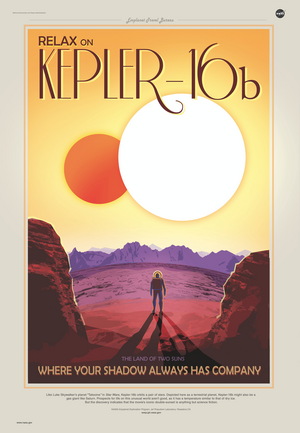

(ABOVE: Some very cool illustrations of hypothetical travel ads for habitable planets found with the Kepler satellite)

However the simulation is created, it seems much more likely that at least one civilization starts a simulation. If current trends continue, such as the definite interest in our ancestors shown in the multitude of historical studies, there will be plenty of people who would like to simulate how their ancestors lived. I know that I would find a simulation of a Greek town fascinating, for example. The idea that not a single person would want to create a simulation seems unlikely, and so the second postulate is likely false.

So, Bostrom’s first postulate is probably false, and the second postulate is just plain unlikely given human (and, more arguably, alien) nature. Based off of that, do we now live in a simulation? Well, not yet. Just because an ancestor simulation exists doesn’t mean that you’re living in it. First, you have to consider the virtual “birth rate” of these simulations. Bostrom also supposes that, once a sufficiently advanced technology is developed, it takes a lot more effort and time to create a real human than a virtual one. Therefore, the ancestor simulation (or simulations) could have many orders of magnitude more virtual humans living inside it than there are actual humans living outside the computer and controlling the simulation. So, if there are thirty virtual humans for every real human, or even numbers up to 1,000 virtual humans to every human, that means the probability that you are one of the few “real” humans rather than a simulated human is very low.
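The arithmetic behind that last claim is easy to check. The short Python sketch below is only an illustration, and it rests on one simplifying assumption: that you are equally likely to be any one observer, simulated or not. The function name `probability_real` is made up for this example, and the ratios are the illustrative figures from the paragraph above rather than numbers from Bostrom’s paper.

```python
from fractions import Fraction


def probability_real(simulated_per_real: int) -> Fraction:
    """Chance of being a non-simulated human, given N simulated humans per real one.

    Assumption (for illustration only): every observer is equally likely to be you.
    """
    if simulated_per_real < 0:
        raise ValueError("ratio must be non-negative")
    # One real human out of every (N simulated + 1 real) observers.
    return Fraction(1, simulated_per_real + 1)


for ratio in (30, 1000):
    p = probability_real(ratio)
    print(f"{ratio} simulated per real human: {float(p):.2%} chance of being real")
```

At thirty simulated humans per real one, the chance of being “real” is 1 in 31, or about 3%; at a thousand to one it drops to 1 in 1,001, or about 0.1%.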

And that, my friends, is the simulation hypothesis.

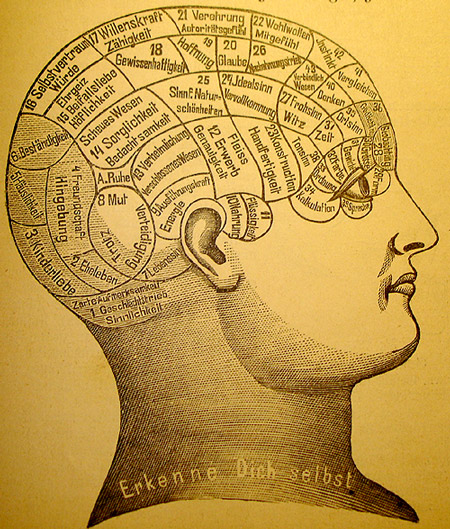

Of course, there are many assumptions made here, some clear and others subtle, some of which could be used to attack Bostrom’s argument. For instance, one of the major assumptions that Bostrom makes is what he calls “Substrate Independence”. Substrate Independence is the idea that a working, conscious brain can, as he writes in his original paper,

“…supervene on any of a broad class of physical substrates. Provided a system implements the right sort of computational structures and processes, it can be associated with conscious experiences. It is not an essential property of consciousness that it is implemented on carbon-based biological neural networks inside a cranium: silicon-based processors inside a computer could in principle do the trick as well.”

Basically, Substrate Independence is the idea that consciousness can take many forms, only one of which is carbon-based biological neural networks. This form of Substrate Independence is pretty hard to grasp, which is why Bostrom argues that the full form of Substrate Independence isn’t actually needed for an ancestor simulation. Really, the only form of Substrate Independence needed to create an ancestor simulation is a computer program running well enough to pass the Turing Test with flying colors.

Besides Substrate Independence, most of the rest of the Simulation Argument is fairly simple. Since we have already deduced that there is likely to be at least one ancestor simulation in existence, the likelihood that we are living in that simulation is pretty high. The logic behind this is that the ancestor simulation doesn’t have a set birth rate that can’t be manipulated. The simulation could have as many simulated people in it as its creators want (though this is stated as obvious, it is also debatable), and there would be many orders of magnitude more simulated people than actual human people not in a simulation, and even more if there are multiple simulations running at the same time. The present-day parallel is online video games such as MMORPGs, which are constantly getting bigger and bigger, with many more characters made in those games than real humans born every second.

Obviously, this argument is very speculative. Substrate Independence, ancestor simulations, the whole thing; it all just seems too far-fetched to be true. And, after all, Bostrom isn’t a computer scientist; he works at the Faculty of Philosophy at Oxford. But that doesn’t mean his argument is false by nature, as in his original paper he goes into incredible detail about computing power and Substrate Independence, and even creates a mathematical formula for calculating the probability that we live in a simulation. In fact, if we want to get technical, Bostrom categorizes what I have told you so far as the Simulation Hypothesis, with the full Simulation Argument being the probability equation Bostrom created, some “empirical” facts, and their relation to irrefutable philosophical principles. If you want to read Bostrom’s brilliant, albeit a little wordy, paper, click HERE.

To sum it up: a man named Nick Bostrom created a series of logical “propositions” that, when examined closely, seem to suggest that there is a very high probability that we are living in a simulation. In fact, the probability is so high that to close his paper, Bostrom writes:

“Unless we are now living in a simulation, our descendants will almost certainly never run an ancestor simulation.”

You may take this however you want. Personally, I think the simulation argument is one of the coolest things to ever come out of philosophy. And if it’s true that we live in a simulation, that only makes it cooler. After all, we will never know for sure whether we live in a simulation or not, and either way, it doesn’t affect your life in the slightest. You have no choice but to continue living your life as you did, maybe in a simulation, maybe not. All this shows is that as technology continues to develop at a rapid pace, we are getting closer and closer to even the wildest of science fiction technologies becoming a reality.

Sources: https://www.youtube.com/watch?v=nnl6nY8YKHs http://www.simulation-argument.com/simulation.html

The Simulation Argument Part #1 – The First Proposition

09 years

This is the first article in FFtech’s series on The Simulation Argument. Enjoy, and check back for the following articles in the upcoming weeks.

*Warning – the following is incredibly speculative.*

Life seems real, right? This sounds like an obvious statement – of course life is real. That’s what life is. We are living, breathing humans, going about our daily lives, playing our part in the grand theater of life.

Or so we think.

Ok ok, I’ll stop being dramatic. This isn’t what you think; I’m not going to tell you that you’re a reincarnation of a turtle, or that you’re a ghost or spirit. But what I’m going to propose may seem even more preposterous. Brace for it. Ready? Using logical steps, some are arguing that they can prove the likelihood that we are living purely in a simulation is very high.

Whaaaat?? How could we possibly be living in a simulation, with just a bunch of code constituting our entire existence? This idea, famously popularized in the film The Matrix, now has a rigorous philosophical argument behind it, created by Nick Bostrom. For Bostrom, however, instead of humans being controlled by machines in a sci-fi thriller, the Simulation Argument works through a sequence of logical steps in an effort to demonstrate that there is a greater chance than we might think that we are living in a simulation. The argument Bostrom put together has three propositions, one of which must be true:

#1. Civilizations inevitably go extinct before reaching “technological maturity,” the time at which civilization can create a simulation complex enough to simulate conscious human beings. Meaning: no simulations.

#2. Civilizations can reach technological maturity, but those who do have no interest in creating a simulation that houses a world full of conscious humans. Meaning: no simulations. Not even one.

#3. We are almost certainly living in a simulation.

Hold it right there, you may be saying. It’s obvious that the first one it true, meaning we couldn’t be able to be in a simulation. That statement, that the first postulate is false, seems logical, but when put through a bunch of philosophical hurdles, doesn’t hold water. The reason that #1 is probably wrong is that the likelihood of sophisticated alien civilizations existing is actually quite high. Although beyond the scope of this article, the vast number of habitable planets, including planets outside of the Habitable Zone but that still may harbor life, is extremely large. Somewhere in the universe, intelligent life must have evolved. Once we have accepted that conclusion, it becomes less likely that this stage of development is unreachable by any one of these civilizations. From an evolutionary perspective, we as humans on Earth are arguably not too far away from being able to simulate a full chemical human mind, and within say 100-1,000 years we may have developed such a simulation similar to the one Bostom is hypothesizing.

One of the main principles of science, as the great Carl Sagan reminds us in his Pale Blue Dot passage, is that humans on Earth aren’t special, chosen to be the singular life form in the universe. The Milky Way isn’t special either; it is quite ordinary among galaxies, in fact. If every other alien civilization went extinct within 100-1,000 years of reaching our level of technological development, it would certainly make us one very special species. It seems much more likely that at least one civilization, whether it is our own future selves or an alien species, develops to this advanced level of sophistication. Thus, after some considerable mental wrestling, the first postulate is deemed by Bostrom to be most likely false.
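The "at least one civilization" step can be made concrete with a little arithmetic. The sketch below is only an illustration, not Bostrom's own formulation: it assumes each civilization independently reaches technological maturity with the same probability, and both the probability and the civilization counts are made-up numbers chosen for the example.

```python
def prob_at_least_one(p_single: float, n_civilizations: int) -> float:
    """Chance that at least one of n civilizations reaches technological maturity.

    Assumes (hypothetically) that civilizations are independent and that each
    one has the same probability p_single of making it.
    """
    return 1.0 - (1.0 - p_single) ** n_civilizations


if __name__ == "__main__":
    # Hypothetical numbers: a 0.1% chance per civilization.
    p = 0.001
    for n in (10, 1_000, 100_000):
        print(f"{n:>7} civilizations -> {prob_at_least_one(p, n):.4f}")
```

Even with long odds for any single civilization, the chance that every one of them fails shrinks quickly as the number of civilizations grows, which is the intuition behind rejecting the first postulate.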

But, as even Bostrom himself admitted, we don’t have sufficient evidence against either of the first two postulates to completely rule them out. There are plenty of theories that favor a hypothetical “Great Filter,” some event that happens to every advanced civilization in the universe, drives it to extinction, and will do the same to us once we reach that stage. Maybe it will be a technology that, once discovered, causes every civilization to ultimately destroy itself (e.g., genetic manipulation, nuclear power and weapons, bio-engineered diseases, etc.). The Great Filter has also been offered as an explanation for why we haven’t already detected some type of alien life, but the jury is still out on that one. Whatever the Great Filter may actually be, we can’t say with complete confidence that the first postulate is 100% wrong. But, in the absence of any conclusive argument for a Great Filter, we will for now follow along with Bostrom and treat the first postulate as false.

This is the first big step in the Simulation Argument. The rest of the argument is built upon the idea that alien civilizations could actually develop and use ancestor simulations. This isn’t a small step to make, and it draws in many other philosophical complications. For instance, is creating a conscious computer program as easy as replicating a human brain in code, or is there more to it? I’ll get into this and more in the next installment of FFtech’s Simulation Argument series, so check back soon.

Sources: https://www.youtube.com/watch?v=nnl6nY8YKHs http://www.simulation-argument.com/simulation.html

Are All Animals Doomed For Extinction? Part 4 – Is There Hope?

09 years

This is the final article in FFtech’s De-extinction and Conservation Tech series. To read the first article, go HERE, to read the second article, go HERE, and you can find our most recent article HERE.

So, is there hope for the animals of Earth? That’s the million-dollar question. Are an ever-growing number of species ultimately doomed to extinction? While this question may be impossible to answer for now, it is certainly an outcome we all hope to avoid.

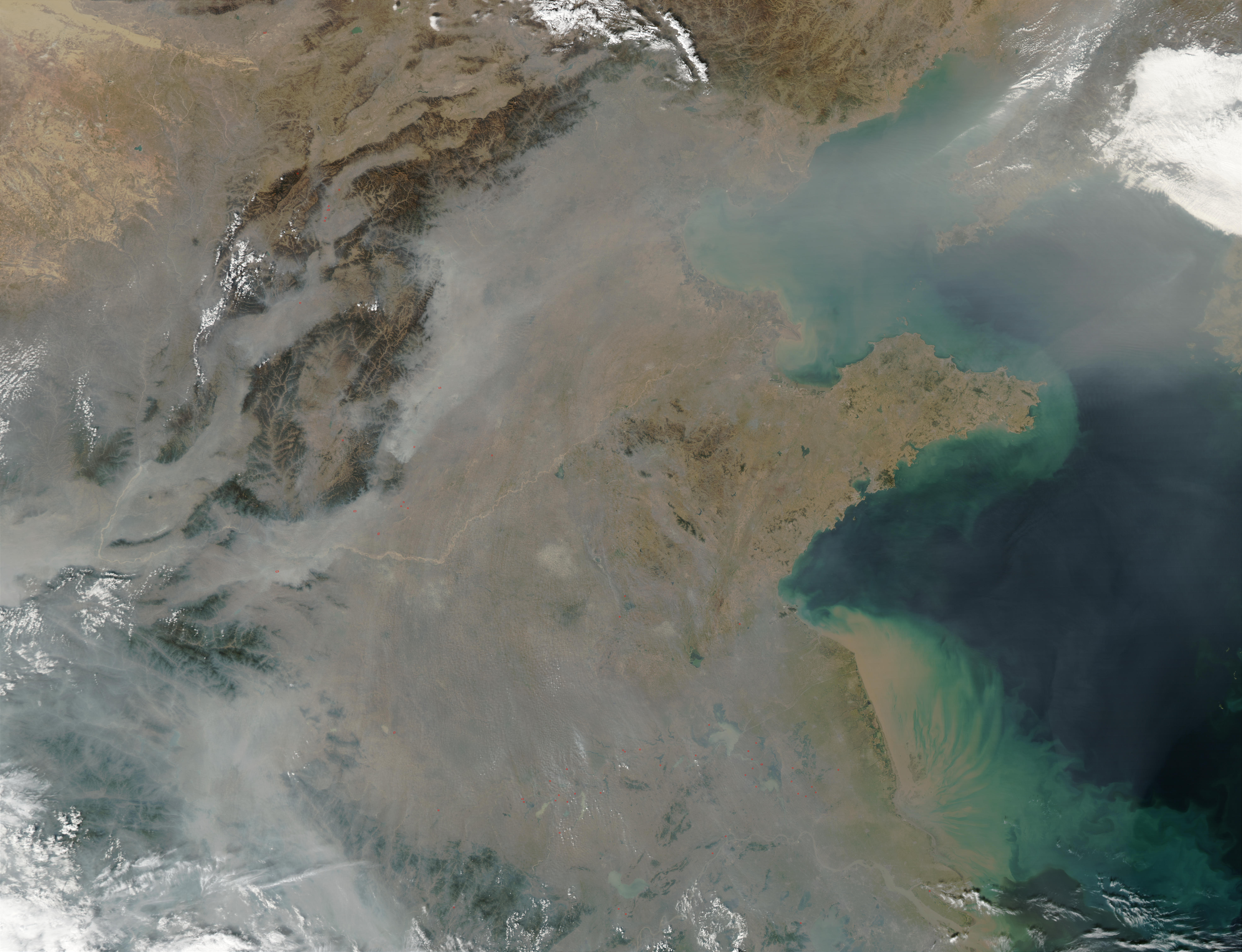

In the present, we can do some things to slow the biggest contributors to species extinction, such as deforestation and poaching. Efforts are underway in battlegrounds such as the Amazon rainforest to curb the heartless logging of the forests. So much forest is cut down that every minute, roughly 36 football fields of trees are destroyed, along with the homes of countless animals, plants, insects, fungi and other amazing life. Many efforts to stop the poaching of rhinos and elephants in Africa and Asia are also taking place, targeting not only the poachers themselves but also the incredibly damaging market for tusks and horns in China and other countries, and leveraging the star power of local celebrities to amplify the message. Plenty of others are chipping in, and yet the trend is still not changing significantly.

Who am I to say that we’re doomed, though? People have miraculously come together to do great things before, and I’m sure they can do so again for causes like these. After all, our animals are what differentiate Earth from some other floating rock out in the Milky Way.

But just stopping this one problem won’t be enough. Overpopulation will drive people into the habitats of more animals. Global warming will continue to melt the ice caps, not only destroying the homes of many Arctic and Antarctic species, but also raising sea levels that threaten to drown countless other animals living near coastlines across the world. In other words, the survival of Earth’s fauna is threatened not only by the problems that target animals directly, but also by the broader problems humans have caused. If we are going to be saved by some miracle technology that stops global warming, and the global population levels out, so be it. To be honest, I highly doubt that will happen. For many of these problems, at least, humanity will have to exercise its altruistic muscles and see if we can fix the wrongs that the rise of Homo sapiens has brought upon the natural world. As interesting as I find the fields of cosmology and astronomy, at least as much attention and money should be spent studying the fragile ecosystems of the great world we live on, rather than already looking forward to abandoning this planet for another one that is probably not as unique and fascinating as our own.

“Look again at that dot. That’s here. That’s home. That’s us. On it everyone you love, everyone you know, everyone you ever heard of, every human being who ever was, lived out their lives. The aggregate of our joy and suffering, thousands of confident religions, ideologies, and economic doctrines, every hunter and forager, every hero and coward, every creator and destroyer of civilization, every king and peasant, every young couple in love, every mother and father, hopeful child, inventor and explorer, every teacher of morals, every corrupt politician, every “superstar,” every “supreme leader,” every saint and sinner in the history of our species lived there-on a mote of dust suspended in a sunbeam.

The Earth is a very small stage in a vast cosmic arena. Think of the endless cruelties visited by the inhabitants of one corner of this pixel on the scarcely distinguishable inhabitants of some other corner, how frequent their misunderstandings, how eager they are to kill one another, how fervent their hatreds. Think of the rivers of blood spilled by all those generals and emperors so that, in glory and triumph, they could become the momentary masters of a fraction of a dot.

Our posturings, our imagined self-importance, the delusion that we have some privileged position in the Universe, are challenged by this point of pale light. Our planet is a lonely speck in the great enveloping cosmic dark. In our obscurity, in all this vastness, there is no hint that help will come from elsewhere to save us from ourselves.

The Earth is the only world known so far to harbor life. There is nowhere else, at least in the near future, to which our species could migrate. Visit, yes. Settle, not yet. Like it or not, for the moment the Earth is where we make our stand.

It has been said that astronomy is a humbling and character-building experience. There is perhaps no better demonstration of the folly of human conceits than this distant image of our tiny world. To me, it underscores our responsibility to deal more kindly with one another, and to preserve and cherish the pale blue dot, the only home we’ve ever known.”

– Carl Sagan

Thanks for sticking with me through this whole conversational journey! If you want to check out the first, second and third articles in the series, go HERE.

Sources: http://www.biologicaldiversity.org/programs/biodiversity/elements_of_biodiversity/extinction_crisis/ http://wwf.panda.org/about_our_earth/biodiversity/biodiversity/ http://www.nature.com/news/2011/110823/full/news.2011.498.html

Are All Animals Doomed For Extinction? Part 3 – Noah’s DNA Ark

09 years

This is the third installment in Fast Forward’s De-extinction & Conservation tech series. For the first article, click HERE, and to read the second article, go HERE.

What happens if we can’t stop the demise of a rising share of Earth’s species? What if, in a worst-case scenario, we actually can’t halt the extinctions? While clearly an extreme scenario, this outcome becomes worth contemplating if the conservation techniques discussed in the previous articles in this series fail. Most likely, habitat destruction would be the cause of accelerating extinctions, and with fewer habitable ecosystems, using frozen tissue samples (see the second article) to re-establish populations in new locales may become one of our only options. This is clearly speculative, but as animals all over the world are losing their homes by the day, it may not be as far off as we hope.

An alternative hope could be rapidly developing technologies such as 3D printing. With a strong library of species DNA, we could potentially “3D-print animals” to populate whatever space we find for them. Problems are many, including technical obstacles as well as the lack of adequate DNA samples to restore balanced ecosystems. That’s where, and I’m surprised I have to say this, the Russians come in.

Russia has granted Moscow State University its second-biggest scientific grant ever for a project called “Noah’s Ark,” which is essentially a giant databank consisting of DNA from every single living and near-extinct species. That is a heck of a big job, but apparently the Russians are ready to take it head on. “It will enable us to cryogenically freeze and store various cellular materials, which can then reproduce. It will also contain information systems. Not everything needs to be kept in a petri dish,” said MSU rector Viktor Sadovnichy.

The physical building designed to house the DNA library is set to be completed in 2018, with its gigantic size reflecting the magnitude of the task at hand. The university’s task could take decades: there are estimated to be 8.7 million species on Earth, with an estimated 86% of land species and 91% of marine species yet to be discovered. At the current rate of field taxonomy, it would take more than 400 years to discover every species on Earth. So even if the scientists manage to sample a good majority of the species we have already found, they will have a long way to go before taking a full backup of Earth’s genetic data.
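To get a feel for the size of that backlog, here is a rough back-of-the-envelope sketch. It uses only the figures quoted above, and it makes two simplifying assumptions of its own: that the overall undiscovered share lies somewhere between the land and marine percentages, and that the job takes exactly 400 years (the estimate is "more than 400," so the real yearly rate would be somewhat lower).

```python
TOTAL_SPECIES = 8_700_000     # estimated species on Earth (from the article)
UNDISCOVERED_LAND = 0.86      # share of land species not yet discovered
UNDISCOVERED_MARINE = 0.91    # share of marine species not yet discovered
YEARS_TO_FINISH = 400         # assumption: treat "more than 400 years" as 400


def undiscovered_range(total: int, low_share: float, high_share: float) -> tuple[float, float]:
    """Bracket the number of undiscovered species between two shares."""
    return total * low_share, total * high_share


def implied_yearly_rate(remaining: float, years: int) -> float:
    """Species that must be described per year to finish in the given time."""
    return remaining / years


if __name__ == "__main__":
    low, high = undiscovered_range(TOTAL_SPECIES, UNDISCOVERED_LAND, UNDISCOVERED_MARINE)
    print(f"Undiscovered species: {low:,.0f} to {high:,.0f}")
    print(
        "Implied discovery rate: "
        f"{implied_yearly_rate(low, YEARS_TO_FINISH):,.0f} to "
        f"{implied_yearly_rate(high, YEARS_TO_FINISH):,.0f} species per year"
    )
```

Under these assumptions, roughly seven and a half to eight million species are still waiting to be found, which is why sampling even the known species is only the start of a full genetic backup.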

This is the third installment in the four-part series on De-Extinction & Conservation tech. Check back here soon for the last article in the series!

Sources: http://rt.com/news/217747-noah-ark-russia-biological/ http://www.nature.com/news/2011/110823/full/news.2011.498.html http://www.nydailynews.com/news/world/russia-build-noah-ark-world-dna-databank-article-1.2059704

Are All Animals Doomed to Extinction? Part 2: The Frozen Zoo

09 years

This is the second in my series on de-extinction technology, the increasing rate of animal extinction, and more. Click HERE to read the first article.

To start, I’ll tell you the first of humanity’s hopes for the survival of Earth’s fauna: a genetic sampling collection, or in the words of the San Diego Zoo, a “Frozen Zoo.” The reason I mentioned Angalifu, the late white rhino from the San Diego Zoo, in Part 1 of this series is that the rhino is now part of an incredible undertaking: creating a frozen collection from any animal that dies at the Zoo, including tissue, sperm/eggs, stem cells, and anything else that may be useful later. They have been doing this for 40 years already, and have amassed so much genetic material that the Zoo now holds the largest frozen gene bank in the world, including rare samples such as the white rhino’s.

This technique holds great promise, including the possibility of cloning an animal or even bringing a species back to life; however, creating one or two of these rhinos or Po’ouli birds isn’t what scientists are trying to achieve. The goal is rather to get these animals back into the wild as part of a stable population. But when the animals that are “created” are the only ones that can repopulate the whole rhino species, genetic complications such as inbreeding can follow. Yet many other species have come back from a very small population, so if we can someday produce a sizable number of genetically distinct white rhinos (i.e., not clones of one or two individuals), then there is a chance that they could grow their population back up to a stable point in the wild.

Even after this major technical hurdle is cleared, another key obstacle would be avoiding the poaching that prompted the fall of this great species in the first place, but that’s a story for another day. For now, though, poaching does raise a new point, one that hurts the case for all of the Jurassic Park fans who want pet velociraptors (or, perhaps more realistically, pet dodo birds): where would we put these animals, and how would they be reintroduced into a modern ecosystem that has already adapted to life without them?

The short answer is: they can’t be, really. After an ecosystem has gone so long without a certain species, reintroducing that species to the wild risks disrupting the new harmony, and the reintroduced species may even act as an invasive species. As our goal is to keep our environment as harmonious as possible, disrupting it just because Velociraptor African safaris would make great business hopefully won’t happen anytime soon.

What I’m trying to say is that the goal of all this time and money is not to bring back long-extinct species, but rather to rescue species that are hovering on the brink of extinction, such as the white rhino. The species the scientists are targeting have only just vacated their natural habitats, ideally ones driven out by humans, as those are the species where we have a clearer moral obligation to try to intercede. Bringing these species back to a stable population, which has been accomplished before with various raptor species and others, is the goal of all this hard work from many talented people.

Make sure to check back here soon for the last two installments in this series!

Are All Animals On Earth Doomed For Extinction? – Part 1

09 years

Back in December, at the San Diego Zoo, a 44-year-old northern white rhinoceros named Angalifu died. Angalifu was one of the last six northern white rhinoceroses in the world, and unfortunately, the remaining five are unable to reproduce. What does that mean? Well, for now it means that the northern white rhinoceros is doomed to extinction, at least in its original state.

Angalifu, the late White Rhino.

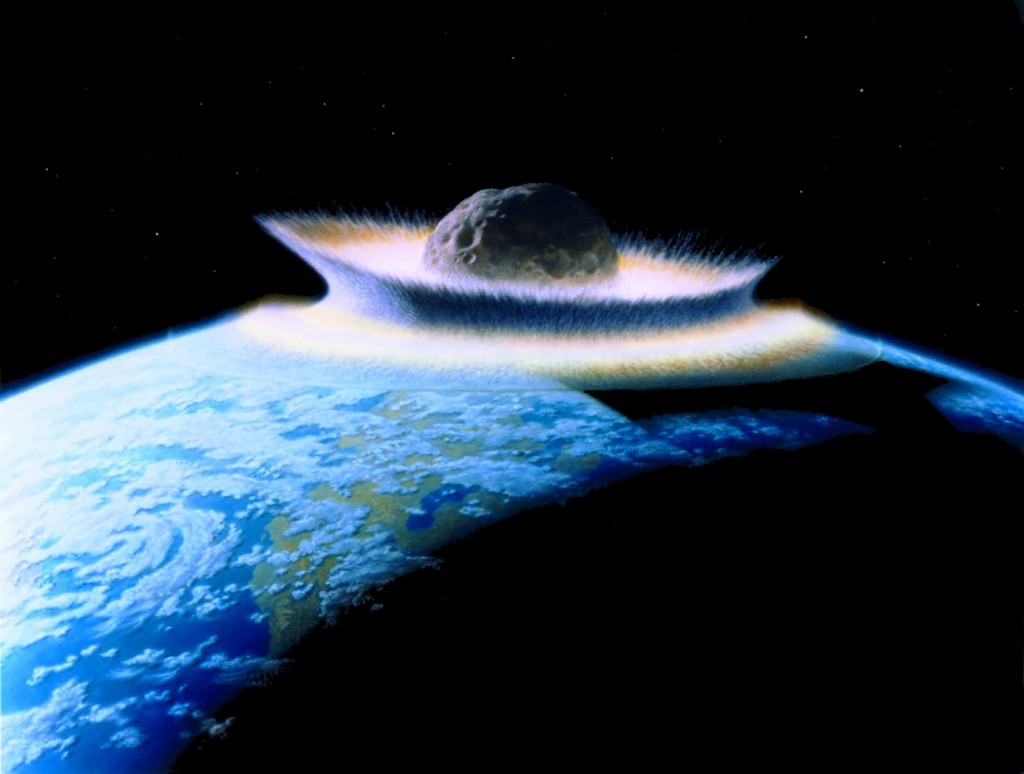

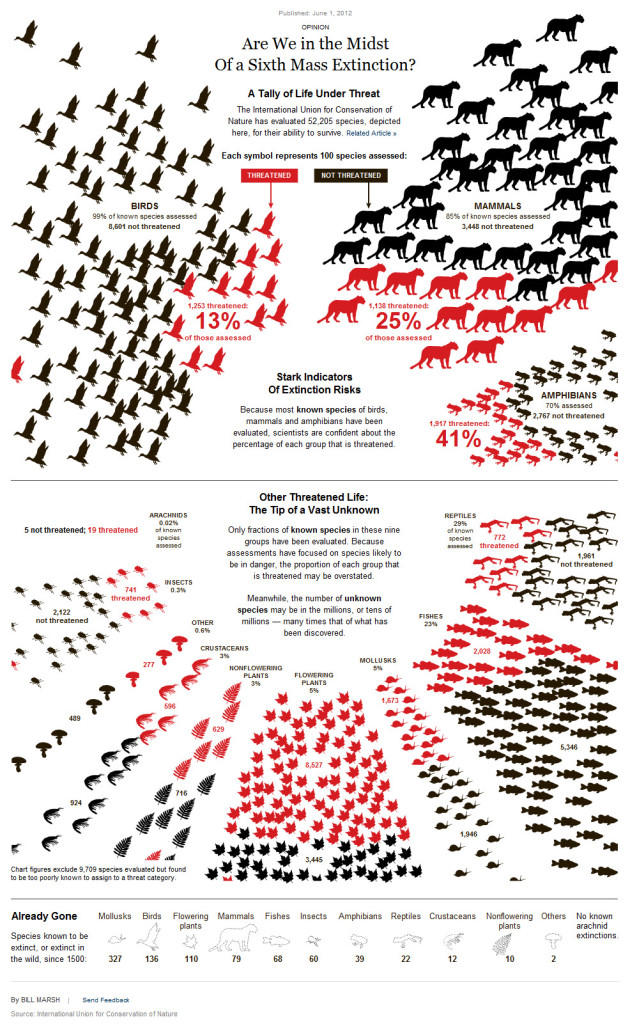

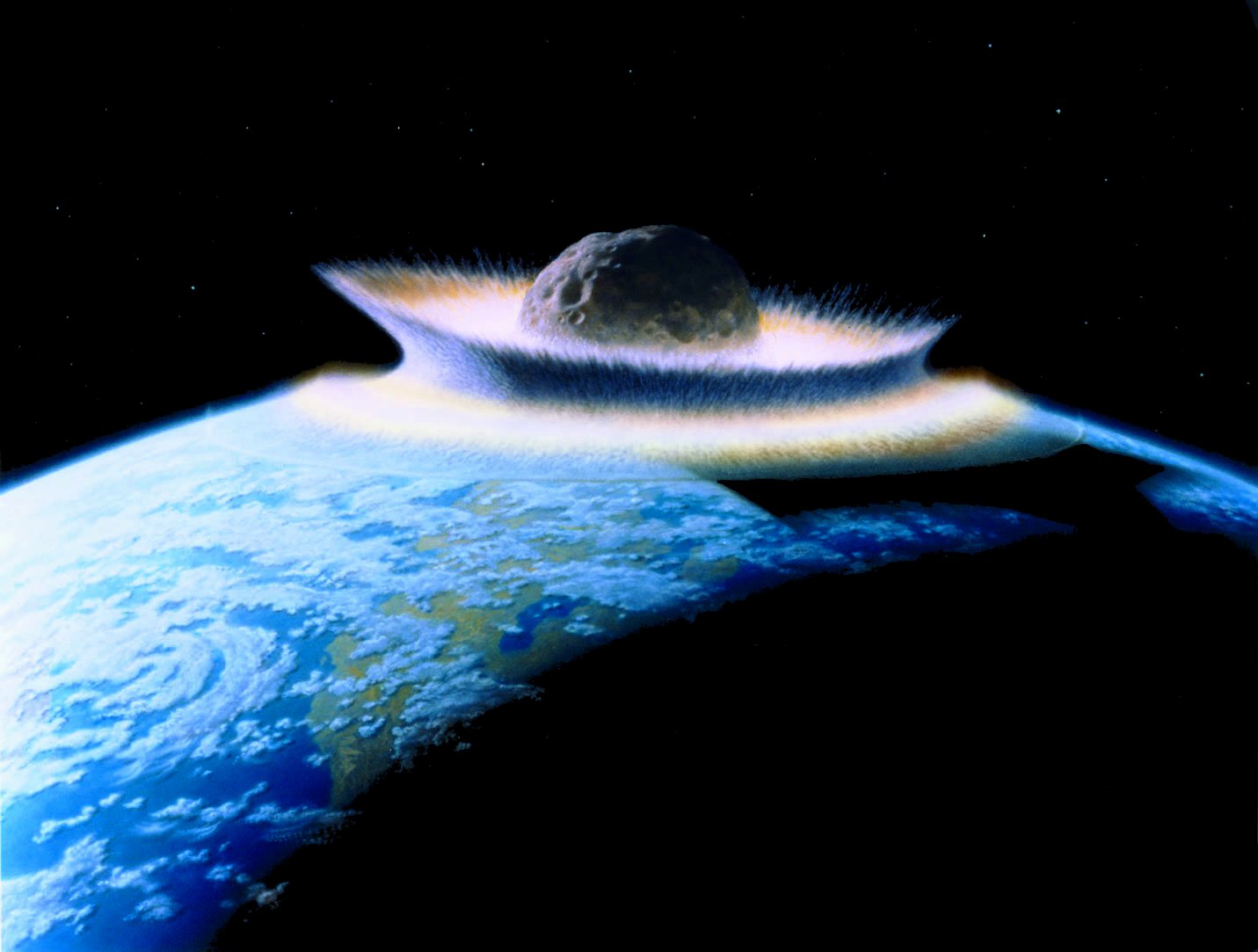

This isn’t something new. It is hard to know what share of species goes extinct each year, as we don’t even know how many species there are on our wonderful planet. Science’s best estimates are that we lose roughly 0.01% to 0.1% of all species annually, which doesn’t seem that drastic at first, but at the high end of species-count estimates (around 100 million) translates to 10,000 to 100,000 species per year. 100,000! Never should the highlight of a year be a picture of Kim Kardashian on a magazine rather than the incredible number of species that died off in that year. In fact, extinction rates are so high, an estimated 1,000 to 10,000 times the natural background rate, that many are calling this episode the sixth mass extinction of all time. Keep in mind that historically, a mass extinction has meant something on the scale of an asteroid slamming into the Earth. That’s not something we as humans want to emulate, and yet we are heading toward an event of that magnitude faster than ever, with no real plan to stop it. Of course, we aren’t there just yet. It is estimated that by mid-century 30% to 50% of all species on Earth could be heading toward extinction, rivaling both the Triassic/Jurassic extinction and the Late Devonian mass extinction, considered two of the five biggest mass extinctions of all time. We are clearly at a point where we have to start doing something; a third of all amphibian species are already at risk of extinction, disappearing at 25,000-45,000 times the background, or normal, extinction rate. Even among primates, our closest relatives on this planet, about 50% of species are at risk of extinction. We aren’t on a good path here.
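The yearly species count depends heavily on how many species you assume exist in the first place. The short sketch below works through that arithmetic using the 0.01% to 0.1% annual loss rate quoted above. The two totals it compares are assumptions for illustration: 8.7 million is the estimate cited in Part 3 of this series, and 100 million is the total implied by the 10,000 to 100,000 figure.

```python
ANNUAL_LOSS_LOW = 0.0001    # 0.01% of species lost per year
ANNUAL_LOSS_HIGH = 0.001    # 0.1% of species lost per year


def species_lost_per_year(total_species: int) -> tuple[float, float]:
    """Low and high estimates of species lost each year for a given total."""
    return total_species * ANNUAL_LOSS_LOW, total_species * ANNUAL_LOSS_HIGH


def implied_total_species(lost_per_year: float, annual_rate: float) -> float:
    """Total species count implied by a yearly loss and a loss rate."""
    return lost_per_year / annual_rate


if __name__ == "__main__":
    # Assumed totals: the 8.7 million estimate and a 100 million upper bound.
    for label, total in (("8.7 million", 8_700_000), ("100 million", 100_000_000)):
        low, high = species_lost_per_year(total)
        print(f"{label} species: {low:,.0f} to {high:,.0f} lost per year")
    print(f"Implied total: {implied_total_species(10_000, ANNUAL_LOSS_LOW):,.0f} species")
```

With the lower species estimate the same percentages give hundreds to thousands of species per year rather than tens of thousands, which is still far above the background rate.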

I’m not writing this just to depress you, though. We do have some hope, even if we don’t have a way to stop the ultimate demise of much of the life on Earth. As much as I wish it weren’t true, there is most likely no economical way to halt the pace of the industrial growth that is compromising our natural resources and habitats. Based on persistent long-term trends, it appears that sooner or later we will all be living in an urban or suburban environment (or a dustbowl, if you are Matthew McConaughey), and the associated environmental damage will be very hard to reverse. But this doesn’t mean that the affected species can’t come back from the dead. We aren’t exactly there yet: a failed attempt to bring the extinct bucardo (a type of Spanish ibex) back to life ended with a deformed newborn dying soon after birth. Still, we are getting extremely close. We all know that Jurassic Park is fiction; even the far more recent woolly mammoth is too far back in time to clone. But there are plenty of recently extinct species that are well on their way to being cloned, such as the Hawaiian Po’ouli bird. The cloning and repopulation of dying species may be commonplace in the future. Earth’s animals are precious and define our planet; we want to protect them from our destructive impact for as long as we can.

This is the first part in a four-part series on de-extinction, increasing extinction rates, conservation technology and more. Check back for the next installment in the series soon!

Sources: http://wwf.panda.org/about_our_earth/biodiversity/biodiversity/ http://www.biologicaldiversity.org/programs/biodiversity/elements_of_biodiversity/extinction_crisis/ http://www.bbc.co.uk/nature/extinction_events http://ngm.nationalgeographic.com/2013/04/125-species-revival/zimmer-text http://www.nature.com/news/2011/110823/full/news.2011.498.html