TOTW: Google's Project Ara Modular Phone May Be The Future Of Smartphones (October 30, 2014)

Posts tagged VR

Fighting A Robot In VR – Midas Touch Games’ BattleRig

It punched to the right. I blocked. Another punch, this time to the left. I blocked again. I quickly jabbed forward, knocking it back slightly. Regaining its balance, it took a step back, swinging its arms. I took the initiative, hitting it with a sharp uppercut to the jaw. Uppercuts seemed to have the best effect, I noticed. It went flying, landing head over heels. Relaxing, I took off the HTC Vive virtual reality headset. I had just demolished a large red battle robot in a one-on-one fight in virtual reality.

That was my experience with Midas Touch Games’ demo, BattleRig, at Augmented World Expo (AWE) in Silicon Valley. Midas Touch Games has taken on one of the biggest challenges in VR yet: touchability, or the ability to touch and manipulate objects in virtual reality. It has taken years just to build VR headsets capable of producing immersive games, videos, and experiences. But the whole idea of VR relies on immersion, and if the virtual world doesn’t respond the way a physical one does, the experience loses credibility. If you touch an object, say, a book on a shelf, and it doesn’t react, that temporarily takes you out of the experience. In an ideal VR experience, everything you touch reacts accordingly, limiting the number of times you actively think, “Oh right, this isn’t real. I’m in virtual reality.”

While the ideal virtual reality experience is obviously still years away, Midas Touch Games and many other companies at AWE are helping to make it a reality. We have the headsets, the 3D models, and 360-degree video; now we need to make that content interactive. Midas Touch Games is doing just that. The one-on-one fight with a robot I was lucky enough to experience was just one example of the company’s joint-based physics for VR. Joint-based physics has been used in VR for inanimate objects for a while, but for more complex, articulated characters like robots, it just didn’t work well. Midas Touch has created a joint-based system designed specifically for character simulations, which it calls the Midas Physics Engine: a 3D engine for virtual reality characters.
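The article doesn’t explain how the Midas Physics Engine works internally, so what follows is not its implementation. To illustrate the general idea of joint-based physics for a character, here is a minimal Python sketch of a 2D “arm” made of point masses (integrated with Verlet integration) linked by distance constraints, the simplest kind of joint. All names (`Particle`, `DistanceJoint`, `step`, `make_arm`, `push`) and parameters such as the 1/60 s time step are hypothetical.

```python
import math
from dataclasses import dataclass

# Hypothetical toy model, not the Midas Physics Engine: point masses joined by
# distance constraints, integrated with position Verlet.


@dataclass
class Particle:
    x: float
    y: float
    prev_x: float  # previous position; Verlet stores velocity implicitly
    prev_y: float
    pinned: bool = False  # pinned particles never move (e.g., a shoulder)


@dataclass
class DistanceJoint:
    a: int  # index of the first particle
    b: int  # index of the second particle
    rest: float  # length the joint tries to maintain


def step(particles, joints, dt=1 / 60, gravity=-9.8, iterations=10):
    """Advance one frame: Verlet integration, then iterative constraint relaxation."""
    for p in particles:
        if p.pinned:
            continue
        vx, vy = p.x - p.prev_x, p.y - p.prev_y
        p.prev_x, p.prev_y = p.x, p.y
        p.x += vx
        p.y += vy + gravity * dt * dt
    # Repeatedly nudge each linked pair back toward its rest length.
    for _ in range(iterations):
        for j in joints:
            pa, pb = particles[j.a], particles[j.b]
            dx, dy = pb.x - pa.x, pb.y - pa.y
            dist = math.hypot(dx, dy)
            wa = 0.0 if pa.pinned else 1.0
            wb = 0.0 if pb.pinned else 1.0
            if dist == 0.0 or wa + wb == 0.0:
                continue
            error = (dist - j.rest) / dist  # fraction of the offset to remove
            pa.x += dx * error * wa / (wa + wb)
            pa.y += dy * error * wa / (wa + wb)
            pb.x -= dx * error * wb / (wa + wb)
            pb.y -= dy * error * wb / (wa + wb)


def make_arm():
    """A pinned shoulder, an elbow, and a hand, held straight out horizontally."""
    particles = [
        Particle(0.0, 0.0, 0.0, 0.0, pinned=True),
        Particle(1.0, 0.0, 1.0, 0.0),
        Particle(2.0, 0.0, 2.0, 0.0),
    ]
    joints = [DistanceJoint(0, 1, 1.0), DistanceJoint(1, 2, 1.0)]
    return particles, joints


def push(particle, dx, dy):
    """Apply an impulse (e.g., a punch) by shifting the previous position."""
    particle.prev_x -= dx
    particle.prev_y -= dy


if __name__ == "__main__":
    particles, joints = make_arm()
    push(particles[2], 0.0, 0.05)  # knock the hand upward
    for _ in range(120):  # two seconds at 60 frames per second
        step(particles, joints)
    for j in joints:
        a, b = particles[j.a], particles[j.b]
        print(f"link {j.a}-{j.b}: {math.hypot(b.x - a.x, b.y - a.y):.3f}")
```

Because each link is solved as a constraint rather than animated by hand, a push on the hand propagates through the elbow on its own, which is the kind of physically reactive behavior described above. A real character engine would add 3D rigid bodies, joint angle limits, and collisions.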

While something called “Midas Physics Engine” may sound like PR jargon, it’s quite substantive. I’ve demoed a fair number of VR experiences, and while flying around a prehistoric landscape full of dinosaurs is stunning, the feeling that you are actually there disappears when you fly straight through a stegosaurus. But when you’re petting a dog and it actually reacts to your touch, and you can even pick it up and push it around, as in the demo at the Midas Touch booth, the experience is much more immersive and memorable. And when you’re fighting a robot that reacts to your punches and vice versa, you really do feel like you’re there. For me, simply being able to interact with the characters in a VR experience goes a long way toward the ideal, completely immersive VR experience.

The Promise Of Augmented Reality

Just two sessions into Augmented World Expo 2016, the excitement about the industry had already set in. In a talk entitled The Butterfly Dream: Smart Eyewear in 2031, Dan Eisenhardt discussed the future of AR and the Internet of Things, and it’s no surprise that at an AR/VR/IoT conference the perspective on these technologies is optimistic. Eisenhardt addressed a concern shared by many augmented reality skeptics: will people really wear things on their heads all the time?

This entire conference is a testament to the fact that plenty of large corporations are betting the answer is yes. In his talk, Eisenhardt argued that, just as with the book, the wristwatch, and the smartphone, any technology that alters how we take in information, especially one that alters our appearance even slightly, may face a backlash. (Yes, even books faced one, with people complaining that kids were spending too much time inside reading.) But just as with the book, the wristwatch, and the smartphone, the utility of these technologies ultimately overcomes such concerns. The numbers point the same way: incredible industry growth over the last five or so years, colossal projected potential, and more. Zeroing in on AR in particular, the potential use cases are mind-blowing. Walk into a clothing store and a large arrow appears in your vision, identifying clothes in your size? Within a few years, definitely. Texting, calling, searching, and helpful information, all showing up in your vision in an unobtrusive and simple way? Almost here already.

While we’re still some distance from AR glasses that look identical to standard prescription glasses, we’re on our way. Even compared with last year, the glasses being showcased today are smaller, faster, and lighter, and they have higher-quality displays. The experiences you can already have with headsets like HoloLens and ODG R7 are quite amazing, and it’s only natural that by 2031, fifteen years from now, the best use cases will have had plenty of time to become mainstream. Eisenhardt went as far as to say that in 2031 the question will not be “Should I get AR glasses?” but “When will I upgrade my current AR glasses?” In other words, AR will be as ubiquitous as smartphones are today: you won’t leave home without them. I can’t quite decide whether that’s scary or exciting.

Sergey Brin, co-founder of Google, wearing the (for the most part) failed Google Glass, the first mainstream AR headset.

I’m a technological optimist; I feel that AR, VR, and other technologies will substantially change the world as we know it. And I expect to jump on the bandwagon as soon as the prices become more competitive. Nevertheless, the notion that our current reality might be replaced with one that is constantly augmented by computer-generated graphics is slightly unsettling. I like reality as it is, and while I trust AR can make our lives easier and more productive, just as smartphones have, I can’t help but wonder whether being completely plugged into an augmented, even completely simulated world with VR would take a certain realness out of life. A realness I enjoy.

AR glasses, computers worn on our faces, represent a large change to that lifestyle, that reality. Eisenhardt talked about how reality is subjective anyway: how my reality may differ from yours, how easily our perspective on reality can be altered, and how holding onto a non-augmented reality means rejecting a technology just to protect something that never really existed in the first place. If you’re fully immersed in a simulation, a VR/AR experience, such that you feel like you’re in another world, move around like you’re in another world, and hear, smell, touch, and taste like you’re in another world, who’s to say you’re not in another world?

This is, of course, speculation. By 2031, I would expect AR to be at least something you use weekly, if not daily. By then, using AR would be no different from opening a laptop, checking your smartphone, or even reading a book. The idea of our current, unaltered, unaugmented reality is slowly being broken down, with each new technological advance making our reality seem more and more mutable. AR is very exciting; I’ve had the chance to demo some pretty incredible experiences here at AWE 2016, from beating up a robot and petting a dog with Midas Touch Games, to making music with Subpac, to exploring a prehistoric world of dinosaurs with LifeLiQe, all in AR and VR. And while the novelty of these experiences will soon fade, it will be replaced by very practical and helpful uses of the technology. While it may be scary, and certainly is exciting, there’s no doubt AR will be a major player in the future of entertainment, enterprise, and more.

Augmented Vs. Virtual Part 2 – Augmented Reality

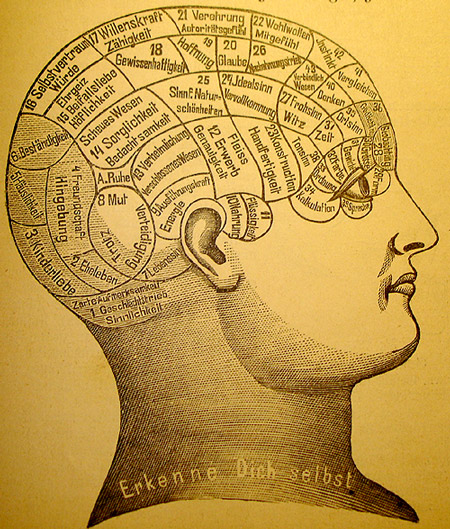

Reality is very personal: it is how we perceive the world around us, and it shapes our existence. And while individual experiences vary widely, for as long as humans have existed, the nature of our realities has been broadly relatable from person to person. My reality is, for the most part, at least explainable in terms of yours. Yet as technology grows better and more widespread, we are approaching an era where my reality, at least for a period of time, may be completely inexplicable in terms of yours. There are two main ways this can happen: virtual reality and augmented reality. In virtual reality, technology immerses you in a different, separate world. My earlier article on VR was the first of this two-part series, and can be found HERE.

Whereas virtual reality aims to replace our reality entirely with a new one, augmented reality does what the name suggests: it augments, changes, or adds on to our current, natural reality. This can be done in a wide variety of ways, the most popular currently being a translucent screen close to the eye that projects graphics on top of what you are seeing. This screen can take up your whole field of view, or just a corner of it. Usually, the graphics or text displayed on the screen are not completely opaque, since they would otherwise block your view of the real world. Augmented reality is intrinsically designed to work in tandem with your current reality, while VR dispenses with it in favor of a new one.

With this more conservative approach, augmented reality (AR) likely has greater near-term potential. For VR, creating a new world to inhabit limits many of its possibilities to the realm of entertainment and education. AR, however, has a practically unlimited range of use cases, from gaming to IT to cooking to, well, pretty much any activity. Augmented reality isn’t limited to this role, but for now it works best as a portable heads-up display: a display that shows helpful situational information. For instance, Epson’s booth at Augmented World Expo 2015 had a demo of a driving-assistance app for AR. In my opinion, the hardware held back the software in that case, as the small field of view was distracting and the glasses were bulky, but you could tell the idea has potential. Industrial use cases were also prominently displayed at AWE alongside consumer ones, including instructional IT assistance such as remotely assisted repair (e.g., in a power plant, using remote visuals and audio to help fix a broken part).

Before I go on, I have to mention one product: Google Glass. No AR article is complete without it, since it was the first AR product to make a splash in the popular media. Yet not long after Google Glass was released, it began to fade from the public eye. Obvious reasons included the high price, the very odd look, and its novelty: people couldn’t think of ways they would use it. Plus, with the many legal and trust issues that came with using the device, it often just didn’t seem worth the trouble. Rumor has it that Google is working on a new, upgraded version of the device, so it may make a comeback, but in my opinion it’s too socially intrusive and too new to gain significant traction in the near term.

Although many new AR headsets are in the works (most importantly Microsoft’s HoloLens), development lags behind VR, which has already reached the stage where developers are focused on refining existing designs, as I discussed in the previous VR article. For AR, the situation is different. Hardware developers still have to figure out how to build an AR headset that is cheap but also has a full field of view, is relatively small, and doesn’t obstruct your view when not in use, among other complications. In other words, AR hardware still sometimes gets in the way of enjoying AR content, while VR hardware is well on its way to overcoming that particular obstacle.

Beyond these near-term obstacles, if we want to get really speculative, there could come a time when VR surpasses AR even in pure utility. This could happen when we are able to create a whole world, or many worlds, to be experienced in VR, and we decide that we like these worlds better. When immersion becomes advanced enough to pass for reality, that’s when we will abandon AR, or at least use it less and less over time. Science fiction has pondered this idea, and from what I’ve read, most stories run along the lines of people spending most of their time in the virtual world and sidelining reality. The possibilities are endless in a world made entirely from the fabric of our imagination, whereas our current reality places many restrictions on what we can do and achieve. Most likely, this is a long, long way off, so we have nothing to worry about for now.

Altogether, augmented reality and virtual reality are both innovative, exciting technologies with tremendous potential to be useful. On one hand, AR will most likely be used more than VR for practical purposes in the coming years, since it’s grounded in reality. On the other hand, VR will mostly be used for entertainment, until we hit a situation like the one I described above. It’s hard to pit these two technologies against each other, since both have pros and cons, and it really depends on which one sounds most exciting to you. Nonetheless, both AR and VR deserve plenty of attention and hype, as they will surely change our world forever, for better or worse.

Mindride’s Airflow Can Make You Fly – Well, Virtually

Humans can’t fly without technological assistance, but that hasn’t stopped us from building planes, helicopters, wingsuits, and more. Flying shows up in media ranging from comic books to myths, fairy tales, and cultural folklore. From Icarus to Superman, humans have desired to fly. But as technology has advanced, watching people fly hasn’t satisfied us; now we want to feel like we truly are flying, and virtual reality devices are beginning to grant that wish.

This morning, at the Augmented World Expo in Santa Clara, California, I got the opportunity to fly. At a unique booth at the Expo, a company called Mindride offered an experience, Airflow, that involved strapping myself into a harness, donning headphones and an Oculus Rift, and then flying Superman-style through a virtual Alps-like landscape. How could I say no? And so, after five minutes of harnessing and calibration, I was flung into this mountainous world, floating thousands of feet above the “ground.” Below me were mountains, some snow-capped, others green. Around me, randomly scattered in the sky, were big pink spheres. The objective was to steer toward these spheres, trying not to flinch as you ran right into them, and pop as many as possible. I think I did pretty well, but the larger point is that current-generation VR technology is enabling experiences that really can begin to replicate those that humans have dreamt of for centuries.

The booth was set up pretty unusually. Apart from a desk off to the side, most of the space was taken up by this “ride.” Consisting of a couple of beams with straps, harnesses, and cords running everywhere, the infrastructure was pretty impressive but not exactly family-room-ready. Before you got to fly, you had to put sensors on each arm that tracked where you were pointing your arm relative to your body. Once strapped in, I was hanging horizontally, with the computers gauging whether I was holding both arms straight back (boost mode), my left arm out (go left), my right arm out (go right), or both arms dangling (hover in place). On my head was an Oculus Rift running Airflow’s custom software. For added effect, two fans blew air in your face, varying their output with your flight speed.
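The article describes Airflow’s controls only at the level of arm poses, so the following is not Mindride’s software. As a rough illustration of how such a pose-to-command mapping might work, here is a minimal Python sketch. It assumes each arm sensor reports a direction vector in a hypothetical body frame (+x toward the rider’s head, +y toward the rider’s left, +z toward the ground); the names `classify_arm`, `flight_command`, and `fan_power`, the `CRUISE` fallback, and the 30 m/s top speed are all made up for illustration.

```python
import math
from enum import Enum

# Hypothetical body frame for a rider hanging face-down in the harness:
# +x toward the rider's head, +y toward the rider's left, +z toward the ground.
Vec = tuple[float, float, float]


class Command(Enum):
    BOOST = "boost"
    TURN_LEFT = "turn_left"
    TURN_RIGHT = "turn_right"
    HOVER = "hover"
    CRUISE = "cruise"  # assumed fallback for poses the article doesn't describe


# Reference directions for each recognized arm pose (unit vectors).
POSES: dict[str, Vec] = {
    "back": (-1.0, 0.0, 0.0),  # arm held straight back toward the feet
    "down": (0.0, 0.0, 1.0),  # arm dangling toward the ground
    "left": (0.0, 1.0, 0.0),  # arm held out to the rider's left
    "right": (0.0, -1.0, 0.0),  # arm held out to the rider's right
}


def classify_arm(direction: Vec) -> str:
    """Return the reference pose whose direction best matches the sensor vector."""
    norm = math.sqrt(sum(c * c for c in direction))
    if norm == 0.0:
        raise ValueError("arm direction must be non-zero")
    unit = [c / norm for c in direction]
    # The largest dot product means the smallest angle to the reference direction.
    return max(POSES, key=lambda name: sum(u * r for u, r in zip(unit, POSES[name])))


def flight_command(left_arm: Vec, right_arm: Vec) -> Command:
    """Map the two arm poses to a flight command, following the article's scheme."""
    left, right = classify_arm(left_arm), classify_arm(right_arm)
    if left == "back" and right == "back":
        return Command.BOOST
    if left == "left" and right != "right":
        return Command.TURN_LEFT
    if right == "right" and left != "left":
        return Command.TURN_RIGHT
    if left == "down" and right == "down":
        return Command.HOVER
    return Command.CRUISE


def fan_power(speed: float, max_speed: float = 30.0) -> float:
    """Scale fan output (0.0 to 1.0) with flight speed; max_speed is an assumed cap."""
    return max(0.0, min(1.0, speed / max_speed))


print(flight_command((-1.0, 0.0, 0.0), (-0.9, 0.1, 0.2)))  # Command.BOOST
print(flight_command((0.0, 1.0, 0.0), (0.0, 0.0, 1.0)))  # Command.TURN_LEFT
print(fan_power(15.0))  # 0.5
```

Matching each sensor reading against reference directions with a dot product tolerates sloppy poses, which matters when the rider is dangling in a harness rather than standing still.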

Overall, the experience was surreal. Once you are strapped in and flying, wind in your face, you easily forget your immediate surroundings, which in my case included a gaggle of tech entrepreneurs demoing their products. The immersion was astounding, and Mindride did a great job making the experience more than a run-of-the-mill VR game. Of course, as with any new technology, there are clear hints that you aren’t truly flying across a mountain-filled world chasing pink bubbles. Occasional background noise interfered with the experience, as did my tendency to shift focus from the screen-wide image to pixel-level details. But as technology advances, these subtle distractions will be minimized; in fact, some solutions to the issues I had were even on display at the Expo. As experiences like these gradually become more common in places like malls, theme parks, and even our own homes, we will start to see a blending of reality as we’ve always known it with virtual reality: a reality in which anything is possible. It’s hard to doubt the demand for that.